Executive Summary¶

This project presents a full-stack machine learning solution to forecast monthly product-level sales for SuperKart, a fictional multi-store retail platform. The objective is to empower SuperKart with data-driven insights to improve business planning, inventory allocation, and supply chain efficiency across diverse store locations.

The notebook walks through the complete ML lifecycle — from data exploration and feature engineering to model development, hyperparameter tuning, and deployment. Two regression algorithms — Random Forest and XGBoost — were evaluated, with the final tuned model achieving strong predictive performance on unseen data.

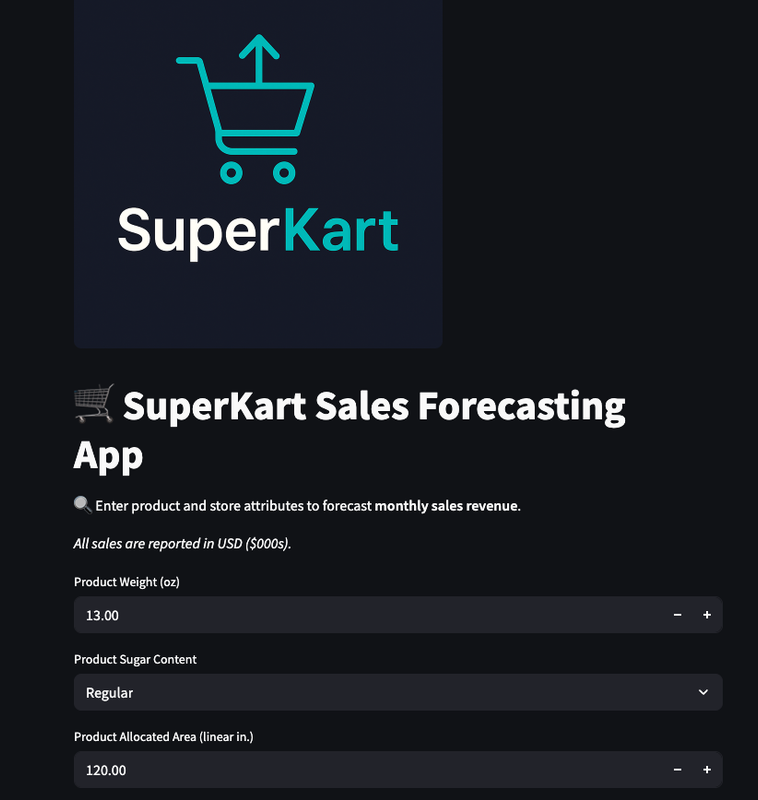

To ensure real-world usability and scalability, the project adopts a decoupled, production-ready architecture:

- A Streamlit frontend for business users to interact with the model

- A Flask backend serving the trained model via a RESTful API

- Docker containers for reproducible deployment

- Hosting via Hugging Face Spaces, allowing public or internal access

By combining predictive modeling with modern MLOps practices, this solution supports SuperKart's ability to anticipate demand, reduce stockouts, minimize overstocking, and enhance operational decision-making — all while remaining flexible, portable, and ready for scale.

Problem Statement¶

SuperKart, a leading e-commerce company, needs to improve its ability to forecast future sales revenue. Accurate sales predictions are essential for effective inventory management, regional sales planning, and resource allocation.

However, fluctuating demand across regions and inconsistent pipeline visibility pose challenges to reliable forecasting.

Business Context¶

A sales forecast is a prediction of future sales revenue based on historical data, industry trends, and the status of the current sales pipeline. Businesses use the sales forecast to estimate weekly, monthly, quarterly, and annual sales totals. A company needs to make an accurate sales forecast as it adds value across an organization and helps the different verticals to chalk out their future course of action.

Forecasting helps an organization plan its sales operations by region and provides valuable insights to the supply chain team regarding the procurement of goods and materials. An accurate sales forecast process has many benefits which include improved decision-making about the future and reduction of sales pipeline and forecast risks. Moreover, it helps to reduce the time spent in planning territory coverage and establish benchmarks that can be used to assess trends in the future.

Objective¶

SuperKart is a retail chain operating supermarkets and food marts across various tier cities, offering a wide range of products. To optimize its inventory management and make informed decisions around regional sales strategies, SuperKart wants to accurately forecast the sales revenue of its outlets for the upcoming quarter.

To operationalize these insights at scale, the company has partnered with a data science firm—not just to build a predictive model based on historical sales data, but to develop and deploy a robust forecasting solution that can be integrated into SuperKart’s decision-making systems and used across its network of stores.

Data Description¶

The data contains the attributes of the various products and stores. The detailed data dictionary is given below.

- Product_Id - unique identifier of each product, each identifier having two letters at the beginning followed by a number.

- Product_Weight - weight of each product

- Product_Sugar_Content - sugar content of each product like low sugar, regular and no sugar

- Product_Allocated_Area - ratio of the allocated display area of each product to the total display area of all the products in a store

- Product_Type - broad category for each product like meat, snack foods, hard drinks, dairy, canned, soft drinks, health and hygiene, baking goods, bread, breakfast, frozen foods, fruits and vegetables, household, seafood, starchy foods, others

- Product_MRP - maximum retail price of each product

- Store_Id - unique identifier of each store

- Store_Establishment_Year - year in which the store was established

- Store_Size - size of the store depending on sq. feet like high, medium and small

- Store_Location_City_Type - type of city in which the store is located like Tier 1, Tier 2 and Tier 3. Tier 1 consists of cities where the standard of living is comparatively higher than its Tier 2 and Tier 3 counterparts.

- Store_Type - type of store depending on the products that are being sold there like Departmental Store, Supermarket Type 1, Supermarket Type 2 and Food Mart

- Product_Store_Sales_Total - total revenue generated by the sale of that particular product in that particular store

Installing and Importing the necessary libraries¶

#Installing the libraries with the specified versions

!pip install numpy==2.0.2 pandas==2.2.2 scikit-learn==1.6.1 matplotlib==3.10.0 seaborn==0.13.2 joblib==1.4.2 xgboost==2.1.4 requests==2.32.3 huggingface_hub==0.30.1 -q

Note:

- After running the above cell, kindly restart the notebook kernel (for Jupyter Notebook) or runtime (for Google Colab) and run all cells sequentially from the next cell.

- On executing the above line of code, you might see a warning regarding package dependencies. This warning can be ignored: the command above installs the library versions that this notebook needs to run successfully.
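After the restart, it can help to confirm that the pinned versions are the ones actually loaded. The following is a minimal sketch using only the standard library. The helper name `find_version_mismatches` is hypothetical, and the `PINNED` dictionary lists only a subset of the packages from the install command above.

```python
from importlib.metadata import PackageNotFoundError, version

# Subset of the pins from the install command above (extend as needed).
PINNED = {
    "numpy": "2.0.2",
    "pandas": "2.2.2",
    "scikit-learn": "1.6.1",
    "xgboost": "2.1.4",
}


def find_version_mismatches(pins):
    """Return {package: (expected, installed_or_None)} for every unmet pin."""
    mismatches = {}
    for package, expected in pins.items():
        try:
            installed = version(package)
        except PackageNotFoundError:
            installed = None  # the package is not installed at all
        if installed != expected:
            mismatches[package] = (expected, installed)
    return mismatches


for package, (expected, installed) in find_version_mismatches(PINNED).items():
    print(f"{package}: expected {expected}, found {installed}")
```

If the loop prints nothing, every listed package matches its pin.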

In this section, we import all required Python libraries for building the SuperKart sales forecasting application. These include tools for data manipulation (Pandas, NumPy), visualization (Seaborn, Matplotlib), model development (Scikit-learn, XGBoost), evaluation, serialization, and deployment (Flask, Hugging Face).

import warnings

warnings.filterwarnings("ignore") # Suppress warnings for cleaner output

# -------------------- Data Handling --------------------

import numpy as np # Numerical operations

import pandas as pd # Data manipulation and analysis

# -------------------- Visualization --------------------

import matplotlib.pyplot as plt # Basic plotting

import seaborn as sns # Statistical visualization

# -------------------- Statistics --------------------

from scipy.stats import pearsonr # For calculating Pearson correlation

# -------------------- Model Development --------------------

from sklearn.model_selection import train_test_split # Train-test split

from sklearn.ensemble import RandomForestRegressor # Random Forest model

from xgboost import XGBRegressor # XGBoost model

from sklearn.tree import DecisionTreeRegressor # Decision tree model

# -------------------- Evaluation Metrics --------------------

from sklearn.metrics import (

mean_squared_error, # RMSE

mean_absolute_error, # MAE

r2_score, # R-squared

mean_absolute_percentage_error # MAPE

)

from sklearn import metrics # For scoring in model selection

# -------------------- Preprocessing & Pipeline --------------------

from sklearn.compose import make_column_transformer # Column-wise transformations

from sklearn.pipeline import make_pipeline, Pipeline # Pipeline creation

from sklearn.preprocessing import StandardScaler, OneHotEncoder # Preprocessing tools

# -------------------- Model Tuning --------------------

from sklearn.model_selection import GridSearchCV, RandomizedSearchCV # Hyperparameter tuning

# -------------------- Deployment --------------------

from flask import Flask, request, jsonify # Flask API for backend deployment

import joblib # Model serialization

import os # Directory management

import requests # For frontend-backend API calls

import time # Timing operations

# -------------------- Hugging Face Deployment --------------------

from huggingface_hub import login, HfApi # Hugging Face authentication & uploads

# -------------------- Google Colab Utility --------------------

from google.colab import files # Downloading files from Colab

Connect to Google Drive¶

We mount Google Drive to access and persist project files such as datasets, serialized models, and deployment folders directly from Colab.

# Mount Google Drive to access files stored under 'My Drive'

from google.colab import drive

drive.mount('/content/drive/')

Mounted at /content/drive/

Loading the dataset¶

We begin by loading the raw SuperKart sales dataset and creating a working copy to preserve the original data.

# Load the dataset from Google Drive (adjust path if needed)

data_path = "/content/drive/My Drive/Colab Notebooks/Project 7/SuperKart.csv"

kart_data = pd.read_csv(data_path)

# Create a working copy to preserve the original data

dataset = kart_data.copy()

Data Overview¶

View the first and last 5 rows of the dataset¶

Let's start by examining the structure of our dataset. This helps verify that the data loaded correctly and gives us an initial sense of the features available.

# Display the first 5 rows of the dataset

dataset.head()

| | Product_Id | Product_Weight | Product_Sugar_Content | Product_Allocated_Area | Product_Type | Product_MRP | Store_Id | Store_Establishment_Year | Store_Size | Store_Location_City_Type | Store_Type | Product_Store_Sales_Total |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | FD6114 | 12.66 | Low Sugar | 0.027 | Frozen Foods | 117.08 | OUT004 | 2009 | Medium | Tier 2 | Supermarket Type2 | 2842.40 |
| 1 | FD7839 | 16.54 | Low Sugar | 0.144 | Dairy | 171.43 | OUT003 | 1999 | Medium | Tier 1 | Departmental Store | 4830.02 |
| 2 | FD5075 | 14.28 | Regular | 0.031 | Canned | 162.08 | OUT001 | 1987 | High | Tier 2 | Supermarket Type1 | 4130.16 |
| 3 | FD8233 | 12.10 | Low Sugar | 0.112 | Baking Goods | 186.31 | OUT001 | 1987 | High | Tier 2 | Supermarket Type1 | 4132.18 |
| 4 | NC1180 | 9.57 | No Sugar | 0.010 | Health and Hygiene | 123.67 | OUT002 | 1998 | Small | Tier 3 | Food Mart | 2279.36 |

🔎 OBSERVATION:

- Nothing noteworthy stands out, and no data is missing, in the first 5 rows.

- Product_Id seems to start with a two-letter prefix. I'll review that later when deciding on transformations prior to model building.
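One way to inspect that prefix is to split each identifier into its letter and number parts. This is only a sketch: the pattern is an assumption taken from the data dictionary (two letters followed by a number), and `split_product_id` is a hypothetical helper, not part of the notebook's pipeline.

```python
import re

# Assumed format from the data dictionary: two letters followed by digits.
PRODUCT_ID_PATTERN = re.compile(r"^([A-Za-z]{2})(\d+)$")


def split_product_id(product_id):
    """Return (prefix, number) for a well-formed ID, or (None, None) otherwise."""
    match = PRODUCT_ID_PATTERN.match(product_id)
    if match is None:
        return None, None
    return match.group(1).upper(), int(match.group(2))


# A few IDs taken from the rows displayed in this notebook.
sample_ids = ["FD6114", "FD7839", "NC1180", "NC584"]
prefixes = [split_product_id(pid)[0] for pid in sample_ids]
print(prefixes)
```

Applied to the full column, the extracted prefix could then be tabulated against Product_Type to see whether it carries any information the other features do not.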

# Display the last 5 rows of the dataset

dataset.tail()

| | Product_Id | Product_Weight | Product_Sugar_Content | Product_Allocated_Area | Product_Type | Product_MRP | Store_Id | Store_Establishment_Year | Store_Size | Store_Location_City_Type | Store_Type | Product_Store_Sales_Total |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 8758 | NC7546 | 14.80 | No Sugar | 0.016 | Health and Hygiene | 140.53 | OUT004 | 2009 | Medium | Tier 2 | Supermarket Type2 | 3806.53 |
| 8759 | NC584 | 14.06 | No Sugar | 0.142 | Household | 144.51 | OUT004 | 2009 | Medium | Tier 2 | Supermarket Type2 | 5020.74 |
| 8760 | NC2471 | 13.48 | No Sugar | 0.017 | Health and Hygiene | 88.58 | OUT001 | 1987 | High | Tier 2 | Supermarket Type1 | 2443.42 |
| 8761 | NC7187 | 13.89 | No Sugar | 0.193 | Household | 168.44 | OUT001 | 1987 | High | Tier 2 | Supermarket Type1 | 4171.82 |
| 8762 | FD306 | 14.73 | Low Sugar | 0.177 | Snack Foods | 224.93 | OUT002 | 1998 | Small | Tier 3 | Food Mart | 2186.08 |

🔎 OBSERVATION:

- Nothing noteworthy stands out, and no data is missing, in the last 5 rows either.

View the shape of the dataset¶

Check the number of rows and columns to understand the dataset size.

# Print the number of rows and columns in the dataset

print(f"The dataset contains {dataset.shape[0]} rows and {dataset.shape[1]} columns.")

The dataset contains 8763 rows and 12 columns.

Dataset Schema and Null Values¶

# Display column names, data types, and non-null counts

dataset.info()

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 8763 entries, 0 to 8762

Data columns (total 12 columns):

| # | Column | Non-Null Count | Dtype |
|---|---|---|---|
| 0 | Product_Id | 8763 non-null | object |
| 1 | Product_Weight | 8763 non-null | float64 |
| 2 | Product_Sugar_Content | 8763 non-null | object |
| 3 | Product_Allocated_Area | 8763 non-null | float64 |
| 4 | Product_Type | 8763 non-null | object |
| 5 | Product_MRP | 8763 non-null | float64 |
| 6 | Store_Id | 8763 non-null | object |
| 7 | Store_Establishment_Year | 8763 non-null | int64 |
| 8 | Store_Size | 8763 non-null | object |
| 9 | Store_Location_City_Type | 8763 non-null | object |
| 10 | Store_Type | 8763 non-null | object |
| 11 | Product_Store_Sales_Total | 8763 non-null | float64 |

dtypes: float64(4), int64(1), object(7)

memory usage: 821.7+ KB

# Count total missing values per column

dataset.isnull().sum()

| | 0 |
|---|---|
| Product_Id | 0 |
| Product_Weight | 0 |
| Product_Sugar_Content | 0 |
| Product_Allocated_Area | 0 |
| Product_Type | 0 |
| Product_MRP | 0 |
| Store_Id | 0 |
| Store_Establishment_Year | 0 |
| Store_Size | 0 |
| Store_Location_City_Type | 0 |
| Store_Type | 0 |
| Product_Store_Sales_Total | 0 |

🔎 OBSERVATION:

- There do not appear to be any nulls or missing data in the dataset: every column has 8763 non-null values.

- Product_Id, Product_Sugar_Content, Product_Type, Store_Id, Store_Size, Store_Location_City_Type, and Store_Type are stored as object (text) columns. Apart from the two identifier columns, these are categorical variables.

- All other variables are numerical in nature.
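The three checks above (dtypes, nulls, and cardinality) can also be gathered into one table. A minimal sketch, assuming pandas is available. `summarize_columns` is a hypothetical helper, and the toy frame reuses a few values from the rows displayed earlier rather than the full dataset.

```python
import pandas as pd


def summarize_columns(df):
    """Return one row per column with its dtype, null count, and unique count."""
    return pd.DataFrame({
        "dtype": df.dtypes.astype(str),
        "n_null": df.isnull().sum(),
        "n_unique": df.nunique(),
    })


# Toy frame built from a few rows shown earlier in the notebook.
sample = pd.DataFrame({
    "Product_Id": ["FD6114", "FD7839", "FD5075"],
    "Product_Weight": [12.66, 16.54, 14.28],
    "Store_Size": ["Medium", "Medium", "High"],
})
summary = summarize_columns(sample)
print(summary)
```

Calling `summarize_columns(dataset)` on the real frame would give the same information as `info()`, `isnull().sum()`, and `nunique()` side by side.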

Check for duplicate data¶

Identify and quantify duplicate records to ensure data quality.

# Check for total number of duplicate rows in the dataset

duplicate_count = dataset.duplicated().sum()

print(f"Number of duplicate rows: {duplicate_count}")

Number of duplicate rows: 0

🔎 OBSERVATION:

- There does not seem to be any duplicated data in the dataset.

Check unique values per column¶

This helps to understand categorical cardinality, identify identifiers, and flag potential constants.

# Count the number of unique values in each column

dataset.nunique()

| | 0 |
|---|---|
| Product_Id | 8763 |
| Product_Weight | 1113 |
| Product_Sugar_Content | 4 |
| Product_Allocated_Area | 228 |
| Product_Type | 16 |
| Product_MRP | 6100 |
| Store_Id | 4 |
| Store_Establishment_Year | 4 |
| Store_Size | 3 |
| Store_Location_City_Type | 3 |
| Store_Type | 4 |
| Product_Store_Sales_Total | 8668 |

# checking unique values for Product_Sugar_Content

dataset['Product_Sugar_Content'].unique()

array(['Low Sugar', 'Regular', 'No Sugar', 'reg'], dtype=object)

🔎 OBSERVATION:

- Product_Sugar_Content shows 4 unique values instead of the 3 listed in the data description above, which points to a data labeling error.

- The value reg, which appears in 108 rows, almost certainly stands for Regular.

- I'll correct this before I begin EDA.
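A check like this can be made systematic by comparing each categorical column against the labels the data dictionary allows. The sketch below assumes pandas. The allowed set is an assumption copied from the data description, and `unexpected_labels` is a hypothetical helper.

```python
import pandas as pd

# Allowed labels taken from the data description (treated as an assumption).
EXPECTED_SUGAR_LABELS = {"Low Sugar", "Regular", "No Sugar"}


def unexpected_labels(series, expected):
    """Return counts of the values in `series` that are not in `expected`."""
    counts = series.value_counts(dropna=False)
    return counts[~counts.index.isin(expected)]


# Toy column containing the stray 'reg' label seen above.
sample = pd.Series(["Low Sugar", "Regular", "reg", "No Sugar", "reg"])
found = unexpected_labels(sample, EXPECTED_SUGAR_LABELS)
print(found)
```

An empty result means every value matches the dictionary. Anything else is a candidate for the kind of relabeling done in the next step.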

Data Cleansing for Product_Sugar_Content Prior to EDA¶

# Standardize inconsistent category labels

print("Before cleanup:", dataset['Product_Sugar_Content'].value_counts(dropna=False))

dataset['Product_Sugar_Content'] = dataset['Product_Sugar_Content'].replace({'Reg': 'Regular', 'reg': 'Regular'})

print("After cleanup:", dataset['Product_Sugar_Content'].value_counts(dropna=False))

Before cleanup: Product_Sugar_Content

Low Sugar 4885

Regular 2251

No Sugar 1519

reg 108

Name: count, dtype: int64

After cleanup: Product_Sugar_Content

Low Sugar 4885

Regular 2359

No Sugar 1519

Name: count, dtype: int64

🔎 OBSERVATION:

- Product_Sugar_Content is now normalized: the 108 rows labeled reg have been mapped to Regular, leaving the three expected categories.

Value Counts for Categorical Columns¶

Understanding the distribution of values in categorical features helps detect imbalance and informs your encoding strategy.

# Identify categorical columns based on object dtype

categorical_cols = dataset.select_dtypes(include='object').columns

# Display value counts for each categorical column

for col in categorical_cols:

    print(f"\nValue counts for '{col}':\n")

    print(dataset[col].value_counts())

Value counts for 'Product_Id':

Product_Id

FD306 1

FD6114 1

FD7839 1

FD5075 1

FD8233 1

..

FD1387 1

FD1231 1

FD5276 1

FD8553 1

FD6027 1

Name: count, Length: 8763, dtype: int64

Value counts for 'Product_Sugar_Content':

Product_Sugar_Content

Low Sugar 4885

Regular 2359

No Sugar 1519

Name: count, dtype: int64

Value counts for 'Product_Type':

Product_Type

Fruits and Vegetables 1249

Snack Foods 1149

Frozen Foods 811

Dairy 796

Household 740

Baking Goods 716

Canned 677

Health and Hygiene 628

Meat 618

Soft Drinks 519

Breads 200

Hard Drinks 186

Others 151

Starchy Foods 141

Breakfast 106

Seafood 76

Name: count, dtype: int64

Value counts for 'Store_Id':

Store_Id

OUT004 4676

OUT001 1586

OUT003 1349

OUT002 1152

Name: count, dtype: int64

Value counts for 'Store_Size':

Store_Size

Medium 6025

High 1586

Small 1152

Name: count, dtype: int64

Value counts for 'Store_Location_City_Type':

Store_Location_City_Type

Tier 2 6262

Tier 1 1349

Tier 3 1152

Name: count, dtype: int64

Value counts for 'Store_Type':

Store_Type

Supermarket Type2 4676

Supermarket Type1 1586

Departmental Store 1349

Food Mart 1152

Name: count, dtype: int64

🔎 OBSERVATION:

- Some of the columns' values appear to be imbalanced. I'll review the distributions further during the EDA process.
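To put a number on that imbalance, the raw counts can be turned into shares. A small sketch, assuming pandas. The counts are copied from the Store_Type output above, and the variable names are illustrative.

```python
import pandas as pd

# Row counts per store type, copied from the value counts above.
store_type_counts = pd.Series({
    "Supermarket Type2": 4676,
    "Supermarket Type1": 1586,
    "Departmental Store": 1349,
    "Food Mart": 1152,
})

# Share of all rows per category, and the ratio of largest to smallest class.
shares = (store_type_counts / store_type_counts.sum()).round(3)
imbalance_ratio = store_type_counts.max() / store_type_counts.min()

print(shares)
print(f"Largest-to-smallest ratio: {imbalance_ratio:.2f}")
```

On the full dataset, `dataset[col].value_counts(normalize=True)` yields the same shares directly.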

Statistical Summary of the Dataset¶

Provides an overview of central tendency, dispersion, and shape of the dataset's distribution.

# Display descriptive statistics for all columns

# .T transposes the table to make it easier to read with column names as row headers.

dataset.describe(include="all").T

| | count | unique | top | freq | mean | std | min | 25% | 50% | 75% | max |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Product_Id | 8763 | 8763 | FD306 | 1 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Weight | 8763.0 | NaN | NaN | NaN | 12.653792 | 2.21732 | 4.0 | 11.15 | 12.66 | 14.18 | 22.0 |
| Product_Sugar_Content | 8763 | 3 | Low Sugar | 4885 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Allocated_Area | 8763.0 | NaN | NaN | NaN | 0.068786 | 0.048204 | 0.004 | 0.031 | 0.056 | 0.096 | 0.298 |
| Product_Type | 8763 | 16 | Fruits and Vegetables | 1249 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_MRP | 8763.0 | NaN | NaN | NaN | 147.032539 | 30.69411 | 31.0 | 126.16 | 146.74 | 167.585 | 266.0 |
| Store_Id | 8763 | 4 | OUT004 | 4676 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Establishment_Year | 8763.0 | NaN | NaN | NaN | 2002.032751 | 8.388381 | 1987.0 | 1998.0 | 2009.0 | 2009.0 | 2009.0 |
| Store_Size | 8763 | 3 | Medium | 6025 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Location_City_Type | 8763 | 3 | Tier 2 | 6262 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Type | 8763 | 4 | Supermarket Type2 | 4676 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Store_Sales_Total | 8763.0 | NaN | NaN | NaN | 3464.00364 | 1065.630494 | 33.0 | 2761.715 | 3452.34 | 4145.165 | 8000.0 |

🔎 OBSERVATION:

- There do not seem to be any noteworthy items in the current dataset. Non-numerical columns show NaN (Not a Number) for std, mean, min, etc., as expected.

Exploratory Data Analysis (EDA)¶

Purpose:

Define the target variable and select relevant numerical and categorical predictors for the regression model.

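We exclude Product_Id and Store_Id since they are identifiers rather than descriptive attributes. Product_Id is unique to each row, and Store_Id has only four values whose row counts match the four Store_Type categories exactly, so it adds little beyond the store-level features.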

# Define the target variable for prediction

target = 'Product_Store_Sales_Total'

# Manually selected numerical features (excluding identifier columns)

numeric_features = [

'Product_Weight',

'Product_Allocated_Area',

'Product_MRP',

'Store_Establishment_Year' # Will transform to Store_Age later

]

# Manually selected categorical features based on domain knowledge

categorical_features = [

'Product_Sugar_Content',

'Product_Type',

'Store_Size',

'Store_Location_City_Type',

'Store_Type'

]

# List of all numeric columns in the dataset (for quick analysis)

cols_list = dataset.select_dtypes(include=['int64', 'float64']).columns.tolist()

# Print the selected and detected columns for verification

print("All numeric columns detected:", cols_list)

print("-" * 100)

print("Target variable:", target)

print("-" * 100)

print("Manually selected numeric features:", numeric_features)

print("-" * 100)

print("Categorical features:", categorical_features)

All numeric columns detected: ['Product_Weight', 'Product_Allocated_Area', 'Product_MRP', 'Store_Establishment_Year', 'Product_Store_Sales_Total']

----------------------------------------------------------------------------------------------------

Target variable: Product_Store_Sales_Total

----------------------------------------------------------------------------------------------------

Manually selected numeric features: ['Product_Weight', 'Product_Allocated_Area', 'Product_MRP', 'Store_Establishment_Year']

----------------------------------------------------------------------------------------------------

Categorical features: ['Product_Sugar_Content', 'Product_Type', 'Store_Size', 'Store_Location_City_Type', 'Store_Type']

Section: Univariate Analysis — Numerical Features¶

Purpose:

Analyze the distribution and presence of outliers in each numerical feature using histograms and boxplots.

# Utility function to plot histogram and boxplot for a given numerical column

def plot_univariate(column_name, df):

    """

    Visualizes the distribution and outliers of a numerical column using a histogram and boxplot.

    Parameters:

    column_name (str): Name of the numerical column to plot

    df (pd.DataFrame): DataFrame containing the column

    """

    # Safety check for valid column name

    if column_name not in df.columns:

        print(f"❌ Column '{column_name}' not found in the DataFrame.")

        return

    # Set up figure layout

    plt.figure(figsize=(12, 4))

    plt.suptitle(f'Univariate Analysis: {column_name}', fontsize=16, fontweight='bold', y=1.05)

    # Plot histogram with kernel density estimate

    plt.subplot(1, 2, 1)

    sns.histplot(df[column_name], kde=True, bins=30, color='skyblue')

    plt.title("Distribution")

    plt.xlabel(column_name)

    # Plot boxplot to detect outliers

    plt.subplot(1, 2, 2)

    sns.boxplot(x=df[column_name], color='salmon')

    plt.title("Boxplot")

    plt.xlabel(column_name)

    plt.tight_layout()

    plt.show()

Product_Weight - Univariate Analysis¶

Let's begin our univariate analysis by examining the distribution and potential outliers in Product_Weight, one of the key product-level features in the dataset.

# Analyze the distribution and outliers in Product_Weight

plot_univariate('Product_Weight', dataset)

🔎 OBSERVATION:

- The distribution of Product_Weight appears roughly symmetric and centered near its mean of about 12.65, with no significant skewness or heavy tails. The boxplot does not indicate the presence of extreme outliers, suggesting this feature is clean and well-behaved for modeling.

Product_Allocated_Area - Univariate Analysis¶

# Analyze the distribution and outliers in Product_Allocated_Area

plot_univariate('Product_Allocated_Area', dataset)

🔎 OBSERVATION:

- The distribution of Product_Allocated_Area is right-skewed, with most values concentrated near the lower end of the scale. The long tail and presence of outliers beyond the 75th percentile suggest that a small subset of products are allocated significantly larger shelf areas. This may be expected for high-demand or bulkier items, indicating a potential business-driven allocation strategy. These outliers are likely valid and informative rather than erroneous.
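The boxplot flags points using the usual 1.5 × IQR rule, which can be reproduced from the quartiles in the statistical summary. A minimal sketch in plain Python. `iqr_bounds` is a hypothetical helper, and the quartiles and maximum are the Product_Allocated_Area values reported by `describe()` above.

```python
def iqr_bounds(q1, q3, k=1.5):
    """Return the (lower, upper) limits beyond which a point counts as an outlier."""
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


# 25% and 75% quartiles and the maximum for Product_Allocated_Area,
# taken from the statistical summary above.
lower, upper = iqr_bounds(0.031, 0.096)
max_area = 0.298

print(f"lower = {lower:.4f}, upper = {upper:.4f}")
print(f"Maximum exceeds the upper limit: {max_area > upper}")
```

With an IQR of 0.065, the upper limit works out to 0.096 + 1.5 × 0.065 = 0.1935, so the observed maximum of 0.298 lies well outside it. The lower limit is negative, which is why no low-end outliers appear for a ratio that cannot go below zero.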

Product_MRP - Univariate Analysis¶

# Analyze the distribution and outliers in Product_MRP

plot_univariate('Product_MRP', dataset)

🔎 OBSERVATION:

- The Product_MRP (Maximum Retail Price) distribution appears roughly symmetric around its mean of about 147, with no significant skewness. The boxplot reveals no extreme outliers, indicating consistent pricing across the product range.

Store_Establishment_Year - Univariate Analysis¶

# Analyze the distribution and outliers in Store_Establishment_Year

plot_univariate('Store_Establishment_Year', dataset)

🔎 OBSERVATION:

- The distribution of Store_Establishment_Year is left-skewed, with most records concentrated in the most recent year, 2009, which is also the maximum in the dataset. The tail on the left reflects the older stores established in 1987, 1998, and 1999. Given that there are only four unique Store IDs in the dataset, the cluster of records at 2009 may point to a high-volume flagship store.
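Because the raw year is just a label for four stores, it is often easier to reason about as an age. The sketch below shows one way the Store_Age transformation mentioned earlier could look, assuming pandas. `REFERENCE_YEAR` is a hypothetical value chosen for illustration, and `add_store_age` is not the notebook's own function.

```python
import pandas as pd

# Hypothetical reference year; in practice use the year the data was collected.
REFERENCE_YEAR = 2025


def add_store_age(df, reference_year=REFERENCE_YEAR):
    """Return a copy of `df` with a Store_Age column measured in years."""
    out = df.copy()
    out["Store_Age"] = reference_year - out["Store_Establishment_Year"]
    return out


# Store IDs and establishment years as they appear in the rows shown earlier.
stores = pd.DataFrame({
    "Store_Id": ["OUT001", "OUT002", "OUT003", "OUT004"],
    "Store_Establishment_Year": [1987, 1998, 1999, 2009],
})
stores_with_age = add_store_age(stores)
print(stores_with_age)
```

The function returns a copy, so the input frame is left unchanged. The same reference year would need to be used at training and at prediction time for the feature to stay consistent.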

Section: Univariate Analysis — Categorical Features¶

Purpose:

To understand the distribution of each categorical variable. This helps identify potential class imbalance, which could impact model training and encoding strategies. We'll use countplot for visualization and display frequency counts.

# Define a utility function to graph categorical features using countplots

def plot_categorical_eda(data, col):

    """

    Displays the distribution of a categorical feature using a countplot,

    and prints the frequency of each category.

    Parameters:

    - data (pd.DataFrame): The dataset

    - col (str): Column name of the categorical feature

    """

    plt.figure(figsize=(10, 5))

    # Plot the count of each category

    sns.countplot(data=data, x=col, order=data[col].value_counts().index)

    plt.title(f"Category Counts for '{col}'", fontsize=14)

    plt.xlabel(col)

    plt.ylabel("Count")

    plt.xticks(rotation=45)

    plt.tight_layout()

    plt.show()

    # Print raw value counts

    print(f"\nValue Counts for '{col}':")

    print(data[col].value_counts())

Product_Sugar_Content¶

# plot 'Product_Sugar_Content':

plot_categorical_eda(dataset, 'Product_Sugar_Content')

Value Counts for 'Product_Sugar_Content':

Product_Sugar_Content

Low Sugar 4885

Regular 2359

No Sugar 1519

Name: count, dtype: int64

🔎 OBSERVATION:

- The majority of products fall under the 'Low Sugar' category, with 'Regular' and 'No Sugar' appearing much less frequently. The distribution appears clean and normalized, with no anomalies or unexpected values.

Product_Type - Univariate Analysis¶

# plot 'Product_Type':

plot_categorical_eda(dataset, 'Product_Type')

Value Counts for 'Product_Type':

Product_Type

Fruits and Vegetables 1249

Snack Foods 1149

Frozen Foods 811

Dairy 796

Household 740

Baking Goods 716

Canned 677

Health and Hygiene 628

Meat 618

Soft Drinks 519

Breads 200

Hard Drinks 186

Others 151

Starchy Foods 141

Breakfast 106

Seafood 76

Name: count, dtype: int64

🔎 OBSERVATION:

Top categories:

- Fruits and Vegetables, Snack Foods, and Frozen Foods have the highest counts, suggesting they are SuperKart's most common product categories.

Bottom categories:

- Seafood, Breakfast, and Starchy Foods are less common, possibly due to limited demand or shelf life.

The distribution appears reasonably balanced across most categories, but the top few dominate the inventory.

Store_Size - Univariate Analysis¶

# plot 'Store_Size':

plot_categorical_eda(dataset, 'Store_Size')

Value Counts for 'Store_Size':

Store_Size

Medium 6025

High 1586

Small 1152

Name: count, dtype: int64

🔎 OBSERVATION:

- Medium stores are by far the most common, with 6,025 entries.

- High stores are less frequent at 1,586 entries.

- Small stores are the least represented with 1,152 entries.

- This imbalance may reflect SuperKart's business model, possibly a greater reliance on medium-sized store formats. It's not a data issue, but it's worth keeping in mind as a possible class imbalance during modeling.

Store_Location_City_Type - Univariate Analysis¶

# plot 'Store_Location_City_Type':

plot_categorical_eda(dataset, 'Store_Location_City_Type')

Value Counts for 'Store_Location_City_Type':

Store_Location_City_Type

Tier 2 6262

Tier 1 1349

Tier 3 1152

Name: count, dtype: int64

🔎 OBSERVATION:

- Most records come from stores in Tier 2 cities, with 6262 entries.

- Tier 1 and Tier 3 cities are much less represented, with only 1349 and 1152 entries respectively.

This suggests SuperKart's operations are heavily concentrated in mid-sized urban centers (Tier 2).

Store_Type - Univariate Analysis¶

# plot 'Store_Type':

plot_categorical_eda(dataset, 'Store_Type')

Value Counts for 'Store_Type':

Store_Type

Supermarket Type2 4676

Supermarket Type1 1586

Departmental Store 1349

Food Mart 1152

Name: count, dtype: int64

🔎 OBSERVATION:

Most records belong to Supermarket Type2 stores (4676 records), followed by:

- Supermarket Type1 (1586)

- Departmental Store (1349)

- Food Mart (1152)

This distribution shows a class imbalance, which could influence model behavior if this variable has predictive power.
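These counts are identical to the Store_Id counts seen earlier, which hints that each store ID corresponds to exactly one store type. A quick way to test such a hunch is sketched below, assuming pandas. `is_one_to_one` is a hypothetical helper, and the toy frame uses only the store columns from rows displayed in the `head()` and `tail()` output.

```python
import pandas as pd


def is_one_to_one(df, left, right):
    """True when each `left` value maps to exactly one `right` value and vice versa."""
    left_ok = (df.groupby(left)[right].nunique() == 1).all()
    right_ok = (df.groupby(right)[left].nunique() == 1).all()
    return bool(left_ok and right_ok)


# Store columns from rows shown in the head() and tail() output above.
sample = pd.DataFrame({
    "Store_Id": ["OUT004", "OUT003", "OUT001", "OUT001", "OUT002", "OUT004"],
    "Store_Type": [
        "Supermarket Type2", "Departmental Store", "Supermarket Type1",
        "Supermarket Type1", "Food Mart", "Supermarket Type2",
    ],
})
print(is_one_to_one(sample, "Store_Id", "Store_Type"))
```

If the same check holds on the full dataset, the store-level columns are effectively different encodings of the same four stores, which is worth remembering when reading feature importances later.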

Section: Bivariate Analysis¶

Let's start with a correlation heatmap matrix

# Print correlation matrix re-ordered to show correlation to the target variable `Product_Store_Sales_Total`

target = 'Product_Store_Sales_Total'

corr_matrix = dataset[cols_list].corr()

# Sort by correlation with the target

sorted_corr = corr_matrix[[target]].sort_values(by=target, ascending=False)

# Order all numeric columns by their correlation with the target

top_features = sorted_corr.index.tolist()

plt.figure(figsize=(10, 6))

sns.heatmap(

corr_matrix.loc[top_features, top_features],

annot=True, fmt=".2f", cmap="Blues", vmin=-1, vmax=1, linewidths=0.5, linecolor="gray"

)

plt.title("Correlation Matrix (Sorted by Target)")

plt.show()

🔎 OBSERVATION:

The correlation matrix reveals two features with strong positive correlations to our target variable Product_Store_Sales_Total:

- Product_MRP (correlation ≈ 0.79): This is expected, since products with higher maximum retail prices will naturally generate higher total sales, assuming similar sales volumes.

- Product_Weight (correlation ≈ 0.74): Heavier products may represent bulkier or premium items that command higher prices, which could explain their strong correlation with total sales.

Interestingly, Product_Allocated_Area has almost no correlation with total sales. This suggests that display area allocation alone isn't a reliable predictor of sales performance.

Visualizing Relationships Between Numeric Features and Sales¶

Purpose:

To better understand which numerical features drive product-store sales, we'll use scatterplots comparing each numeric variable to the target Product_Store_Sales_Total.

List of numerical features in the dataset (excluding ID columns)¶

'Product_Weight', # Weight of the product

'Product_Allocated_Area', # Shelf space allocated in the store

'Product_MRP', # Maximum Retail Price

'Store_Establishment_Year' # Year the store was established

# Scatter plots of numeric features vs target

# Let's start with defining a utility function

def plot_scatter_vs_target(df, feature, target='Product_Store_Sales_Total'):

    """

    Generate a scatter plot between a numeric feature and the target variable,

    including the Pearson correlation coefficient in the title.

    Parameters:

    df (DataFrame): The dataset

    feature (str): The numeric column to plot against the target

    target (str): The target variable (default is 'Product_Store_Sales_Total')

    """

    # Drop NA values to avoid issues with correlation

    plot_data = df[[feature, target]].dropna()

    # Calculate Pearson correlation

    corr_coef, _ = pearsonr(plot_data[feature], plot_data[target])

    # Plot

    plt.figure(figsize=(6, 4))

    sns.scatterplot(data=plot_data, x=feature, y=target, alpha=0.6)

    plt.title(f'{feature} vs {target}\n(Pearson r = {corr_coef:.2f})')

    plt.xlabel(feature)

    plt.ylabel(target)

    plt.grid(True)

    plt.tight_layout()

    plt.show()

# Display scatter plot of our target against `Product_Weight`

plot_scatter_vs_target(dataset, 'Product_Weight')

🔎 OBSERVATION:

Product_Weight has a strong positive linear correlation with Product_Store_Sales_Total (r ≈ 0.74). This suggests that heavier products tend to generate higher sales — potentially due to their larger size, premium packaging, or greater volume, which could command higher retail prices.

# Display scatter plot of our target against `Product_Allocated_Area`

plot_scatter_vs_target(dataset, 'Product_Allocated_Area')

🔎 OBSERVATION:

- Based on the scatterplot above, we see almost no linear correlation between Product_Allocated_Area and the target.

- The Pearson coefficient is 0.00, but this may be due to skewed data with many outliers, as we saw in the boxplot higher up in the notebook.
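When skew is a concern, a rank-based measure such as Spearman's rho is a common cross-check, because it responds to any monotonic relationship rather than only a linear one. The sketch below assumes NumPy and pandas and uses synthetic right-skewed data, not the SuperKart values. Spearman's rho is computed as the Pearson correlation of the ranks, and `pearson_and_spearman` is a hypothetical helper.

```python
import numpy as np
import pandas as pd


def pearson_and_spearman(x, y):
    """Return (Pearson r, Spearman rho) for two equal-length numeric Series."""
    pearson = x.corr(y)                 # linear association
    spearman = x.rank().corr(y.rank())  # Pearson on ranks equals Spearman
    return pearson, spearman


# Synthetic, right-skewed feature; y rises monotonically but not linearly with x.
rng = np.random.default_rng(0)
x = pd.Series(rng.exponential(scale=0.05, size=500))
y = np.log1p(x * 100)

pearson_r, spearman_rho = pearson_and_spearman(x, y)
print(f"Pearson r = {pearson_r:.2f}, Spearman rho = {spearman_rho:.2f}")
```

If both measures come out near zero for Product_Allocated_Area on the real data, the lack of relationship is unlikely to be an artifact of skew alone.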

# Display scatter plot of our target against `Product_MRP`

plot_scatter_vs_target(dataset, 'Product_MRP')

🔎 OBSERVATION:

- Product_MRP shows a strong positive correlation with Product_Store_Sales_Total (Pearson r ≈ 0.76). This is expected: as the unit price of a product increases, total revenue also increases, assuming consistent or growing sales volume.

- The upward trend is quite clear, suggesting that pricing is a dominant driver of total sales. This makes Product_MRP a key feature in the model and a strong predictor of revenue.

# Display scatter plot of our target against `Store_Establishment_Year`

plot_scatter_vs_target(dataset, 'Store_Establishment_Year')

🔎 OBSERVATION:

- There appears to be no strong linear relationship between Store_Establishment_Year and Product_Store_Sales_Total (Pearson r = -0.19). The data points are spread out with no clear trend. This suggests that the age of the store, by itself, is not a major driver of monthly product-store sales.

- It's possible that newer and older stores both perform well depending on location, product mix, and store type, all of which may matter more than the year the store was founded.
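The four coefficients quoted above can also be collected in a single table instead of being read off each plot title. The helper below is a minimal sketch: `correlations_with_target` is a hypothetical name, and the small frame is made-up data used only to show the call.

```python
import pandas as pd

def correlations_with_target(df, features, target):
    """Return Pearson r between each feature and the target, sorted by |r|."""
    corr = df[features + [target]].corr(method="pearson")[target].drop(target)
    order = corr.abs().sort_values(ascending=False).index
    return corr.reindex(order)

# Tiny made-up frame for illustration only (not the SuperKart data)
toy = pd.DataFrame({
    "price":  [10, 20, 30, 40, 50],
    "weight": [5, 3, 6, 2, 4],
    "sales":  [12, 19, 33, 38, 52],
})

summary = correlations_with_target(toy, ["price", "weight"], "sales")
print(summary.round(2))
```

On the notebook's data the call would presumably look like `correlations_with_target(dataset, numeric_features, 'Product_Store_Sales_Total')`, assuming `numeric_features` holds the four column names listed earlier.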

Checking the distribution of our target variable against categorical variables for additional insight.¶

- Visualize how the average monthly sales vary across different categories. We'll use boxplots and barplots to compare mean sales for each category in:

List of Categorical features in the dataset (excluding ID columns)¶

'Product_Sugar_Content', # sugar content of each product like low sugar, regular and no sugar

'Product_Type', # broad category for each product like meat, snack foods

'Store_Size', # size of the store depending on sq. feet like high, medium and low

'Store_Location_City_Type', # type of city in which the store is located like Tier 1, Tier 2

'Store_Type' # type of store depending on the products that are being sold there like Departmental Store, Supermarket Type 1

Define a utility function for our Boxplots and Barplots for our categorical features¶

# Define a utility function to graph categorical values using barplots and boxplots.

def plot_categorical_insights(df, cat_col, target='Product_Store_Sales_Total'):
    """
    Display a barplot of mean values followed by a boxplot for a categorical
    feature against the target variable.

    Parameters:
    - df (DataFrame): The dataset
    - cat_col (str): The name of the categorical column
    - target (str): The target variable to analyze
    """
    # Barplot (Mean Aggregation)
    plt.figure(figsize=(10, 5))
    mean_values = df.groupby(cat_col)[target].mean().sort_values(ascending=False)
    sns.barplot(x=mean_values.index, y=mean_values.values)
    plt.title(f'Average {target} by {cat_col} (Barplot)')
    plt.ylabel(f'Mean {target}')
    plt.xticks(rotation=45)
    plt.tight_layout()
    plt.show()

    # Spacer
    print("\n")  # adds a blank line in output

    # Boxplot
    plt.figure(figsize=(10, 5))
    sns.boxplot(x=cat_col, y=target, data=df)
    plt.title(f'Distribution of {target} by {cat_col} (Boxplot)')
    plt.xticks(rotation=45)
    plt.tight_layout()
    plt.show()

- Display Which Store Types Drive the Highest Sales Revenue?¶

# Display barplot and boxplot of our target against `Store_Type`

plot_categorical_insights(dataset, 'Store_Type')

🔎 OBSERVATION:

- The barplot above shows that Departmental Stores have the highest average Product_Store_Sales_Total and Food Marts the lowest. The Food Mart result makes sense, as this is probably a much smaller store relative to the others.

- The boxplot shows that median sales for Departmental Stores are about 20% higher than for the next store type, Supermarket Type1.

Store_Type could be a strong feature in our model due to its clear impact on sales.

- Display Which Store Sizes Drive the Highest Sales Revenue?¶

# Display barplot and boxplot of our target against `Store_Size`

plot_categorical_insights(dataset, 'Store_Size')

🔎 OBSERVATION:

- The barplot above shows that larger stores have the highest average sales and smaller stores the lowest. This makes sense.

Store_Size could be a strong feature in our model due to its clear impact on sales.

- Display Which Store Location City Types Drive the Highest Sales Revenue?¶

# Display barplot and boxplot of our target against `Store_Location_City_Type`

plot_categorical_insights(dataset, 'Store_Location_City_Type')

🔎 OBSERVATION:

- The barplot above shows that Tier 1 locations have the highest average sales and Tier 3 locations the lowest. This makes sense, as Tier 1 locations may be larger urban areas with a larger selection and volume of products.

Store_Location_City_Type could be a strong feature in our model due to its clear impact on sales.

- Display Which Product Types Drive the Highest Sales Revenue?¶

# Display barplot and boxplot of our target against `Product_Type`

plot_categorical_insights(dataset, 'Product_Type')

🔎 OBSERVATION:

- Interestingly, the barplot and boxplots above show that most product types are fairly evenly distributed and contribute fairly equally to overall total store sales, although Starchy Foods is slightly higher than the rest.

Product_Type alone may not be a strong feature in our model.

- Display Whether Product_Sugar_Content Drives Sales Revenue?¶

# Display barplot and boxplot of our target against `Product_Sugar_Content`

plot_categorical_insights(dataset, 'Product_Sugar_Content')

🔎 OBSERVATION:

- Similar to Product_Type, the barplot and boxplots above show that the categories are fairly evenly distributed and contribute fairly equally to overall total store sales.

Product_Sugar_Content may not be a strong feature in our model.

- Display Which Store Drives the Most Sales Revenue?¶

# Display barplot and boxplot of our target against `Store_Id`

plot_categorical_insights(dataset, 'Store_Id')

🔎 OBSERVATION:

- Based on the barplot and boxplots above, Store ID OUT003 has the highest average sales, followed by OUT001, OUT004, and finally OUT002.

- Let's view the details of each store sorted by average total sales by store¶

# Group, aggregate, and sort by average sales per store

store_avg_info = (

dataset.groupby('Store_Id')[['Store_Size', 'Store_Type', 'Product_Store_Sales_Total']]

.agg({

'Store_Size': 'first',

'Store_Type': 'first',

'Product_Store_Sales_Total': 'mean'

})

.reset_index()

.sort_values(by='Product_Store_Sales_Total', ascending=False)

)

# Display the DataFrame in a clean tabular format

# Format large numbers with commas

store_avg_info['Product_Store_Sales_Total'] = store_avg_info['Product_Store_Sales_Total'].apply(lambda x: f"{x:,.0f}")

# Show the DataFrame

from IPython.display import display

display(store_avg_info)

| | Store_Id | Store_Size | Store_Type | Product_Store_Sales_Total |
|---|---|---|---|---|
| 2 | OUT003 | Medium | Departmental Store | 4,947 |
| 0 | OUT001 | High | Supermarket Type1 | 3,924 |
| 3 | OUT004 | Medium | Supermarket Type2 | 3,299 |
| 1 | OUT002 | Small | Food Mart | 1,763 |

🔎 OBSERVATION:

- Based on the above table, we can see Store ID OUT003 has the highest average sales by store.
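A ranking by mean can differ from a ranking by total when stores contribute different numbers of rows (in the per-store summaries later in the notebook, OUT003 has 1,349 rows while OUT004 has 4,676). A minimal sketch of computing several statistics side by side follows; the two-store frame is made up for illustration, and the output column names (`n_rows`, `mean_sales`, and so on) are my own choice.

```python
import pandas as pd

# Made-up data: store A has fewer rows but a higher average than store B
toy = pd.DataFrame({
    "Store_Id": ["A", "A", "B", "B", "B", "B"],
    "Product_Store_Sales_Total": [500.0, 700.0, 300.0, 350.0, 400.0, 450.0],
})

store_stats = (
    toy.groupby("Store_Id")["Product_Store_Sales_Total"]
    .agg(n_rows="count", mean_sales="mean", median_sales="median", total_sales="sum")
    .sort_values("mean_sales", ascending=False)
)
print(store_stats)
```

Here store A leads on the mean while store B leads on the total, which is the same kind of distinction to keep in mind when reading "most revenue" in the observations above.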

- Let's view the total sales revenue by Product Type per Store¶

# Generate table displaying total sales revenue by Product Type per Store

pivot_sales_revenue = dataset.pivot_table(

index='Product_Type',

columns='Store_Id',

values='Product_Store_Sales_Total',

aggfunc='sum',

fill_value=0

)

pivot_sales_revenue = pivot_sales_revenue.sort_values(by=pivot_sales_revenue.columns.tolist(), ascending=False)

display(pivot_sales_revenue)

| Product_Type | OUT001 | OUT002 | OUT003 | OUT004 |
|---|---|---|---|---|
| Snack Foods | 806142.24 | 255317.57 | 918510.44 | 2009026.70 |
| Fruits and Vegetables | 792992.59 | 298503.56 | 897437.46 | 2311899.66 |
| Dairy | 598767.62 | 178888.18 | 715814.94 | 1318447.30 |
| Frozen Foods | 558556.81 | 180295.95 | 597608.42 | 1473519.65 |
| Household | 531371.38 | 184665.65 | 523981.64 | 1324721.50 |
| Baking Goods | 525131.04 | 169860.50 | 491908.20 | 1266086.26 |
| Meat | 505867.28 | 151800.01 | 520939.68 | 950604.97 |
| Canned | 449016.38 | 151467.66 | 452445.17 | 1247153.50 |
| Health and Hygiene | 435005.31 | 164660.81 | 439139.18 | 1124901.91 |
| Soft Drinks | 410548.69 | 103808.35 | 365046.30 | 917641.38 |
| Hard Drinks | 152920.74 | 54281.85 | 110760.30 | 307851.73 |
| Others | 123977.09 | 32835.73 | 159963.75 | 224719.73 |
| Breads | 121274.09 | 43419.47 | 175391.93 | 374856.75 |
| Starchy Foods | 120443.98 | 20044.98 | 143538.60 | 234746.89 |
| Seafood | 52936.84 | 17663.35 | 65337.48 | 136466.37 |
| Breakfast | 38161.10 | 23396.10 | 95634.08 | 204939.13 |

🔎 OBSERVATION:

- Based on the above table, we can see the sum of total sales for each product type by store.

- I don't see any anomalous items in the data above.

- Let's view the same data in a Heatmap¶

# Generate heatmap displaying total sales revenue by Product Type per Store

# Pivot table: sum of sales by Product_Type and Store_Id

pivot_table = dataset.pivot_table(

index='Product_Type',

columns='Store_Id',

values='Product_Store_Sales_Total',

aggfunc='sum',

fill_value=0

)

# Plot the heatmap

plt.figure(figsize=(14, 10))

sns.heatmap(pivot_table, annot=True, fmt=".0f", cmap="YlGnBu", linewidths=0.5, linecolor='gray')

plt.title("Heatmap of Total Sales by Product Type and Store")

plt.ylabel("Product Type")

plt.xlabel("Store ID")

plt.xticks(rotation=45)

plt.tight_layout()

plt.show()

🔎 OBSERVATION:

- From the heatmap above, we can see that store OUT004 has high sales for Snack Foods, Fruits and Vegetables, and Frozen Foods.

- I don't see any other anomalous data
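Because the stores differ a lot in total volume, a heatmap of raw sums mostly highlights the largest store. One possible complement is to convert each store's column to percentage shares so that product mix can be compared across stores. The sketch below uses a made-up three-row pivot; `S1` and `S2` are placeholder store names.

```python
import pandas as pd

# Made-up pivot: rows are product types, columns are stores
toy_pivot = pd.DataFrame(
    {"S1": [60.0, 30.0, 10.0], "S2": [600.0, 250.0, 150.0]},
    index=pd.Index(["Snacks", "Dairy", "Seafood"], name="Product_Type"),
)

# Divide each column by its own total so every store's shares sum to 100
share_pct = toy_pivot.div(toy_pivot.sum(axis=0), axis=1) * 100
print(share_pct.round(1))
```

The resulting frame could be passed to `sns.heatmap` in the same way as the pivot of raw sums above.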

Let's do a deeper analysis of each store¶

Store OUT001¶

# Define a utility function to output each store's data.
# Create barplot of Total Sales by Product Type

def explore_store(store_id):
    store_data = dataset[dataset["Store_Id"] == store_id]

    print(f"🔍 Summary for Store: {store_id}")  # Print the overall summary table for store
    display(store_data.describe(include="all").T)
    print("-" * 100)  # Print a separator line between tables

    print("\n📊 Product Type Distribution:")  # Print the overall store distribution by Product Type
    display(store_data["Product_Type"].value_counts())
    print("-" * 100)  # Print a separator line between tables

    print("\n📈 Revenue Distribution by Product Type:")  # Print the overall revenue distribution by Product Type
    display(store_data.groupby("Product_Type")["Product_Store_Sales_Total"].sum().sort_values(ascending=False))
    print("-" * 100)  # Print a separator line between tables

    # Barplot of sales per product type
    plt.figure(figsize=(10, 4))
    sns.barplot(
        data=store_data,
        y="Product_Type",
        x="Product_Store_Sales_Total",
        estimator=sum,
        errorbar=None
    )
    plt.title(f"Total Sales by Product Type for {store_id}")
    plt.tight_layout()
    plt.show()

# Call the above function for Store OUT001

explore_store("OUT001")

🔍 Summary for Store: OUT001

| | count | unique | top | freq | mean | std | min | 25% | 50% | 75% | max |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Product_Id | 1586 | 1586 | NC7187 | 1 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Weight | 1586.0 | NaN | NaN | NaN | 13.458865 | 2.064975 | 6.16 | 12.0525 | 13.96 | 14.95 | 17.97 |
| Product_Sugar_Content | 1586 | 3 | Low Sugar | 845 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Allocated_Area | 1586.0 | NaN | NaN | NaN | 0.068768 | 0.047131 | 0.004 | 0.033 | 0.0565 | 0.094 | 0.295 |
| Product_Type | 1586 | 16 | Snack Foods | 202 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_MRP | 1586.0 | NaN | NaN | NaN | 160.514054 | 30.359059 | 71.35 | 141.72 | 168.32 | 182.9375 | 226.59 |
| Store_Id | 1586 | 1 | OUT001 | 1586 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Establishment_Year | 1586.0 | NaN | NaN | NaN | 1987.0 | 0.0 | 1987.0 | 1987.0 | 1987.0 | 1987.0 | 1987.0 |
| Store_Size | 1586 | 1 | High | 1586 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Location_City_Type | 1586 | 1 | Tier 2 | 1586 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Type | 1586 | 1 | Supermarket Type1 | 1586 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Store_Sales_Total | 1586.0 | NaN | NaN | NaN | 3923.778802 | 904.62901 | 2300.56 | 3285.51 | 4139.645 | 4639.4 | 4997.63 |

----------------------------------------------------------------------------------------------------

📊 Product Type Distribution:

| Product_Type | count |
|---|---|
| Snack Foods | 202 |
| Fruits and Vegetables | 199 |
| Dairy | 150 |
| Frozen Foods | 142 |
| Baking Goods | 136 |
| Household | 134 |
| Meat | 130 |
| Canned | 119 |
| Health and Hygiene | 114 |
| Soft Drinks | 106 |
| Hard Drinks | 38 |
| Starchy Foods | 32 |
| Others | 31 |
| Breads | 30 |
| Seafood | 13 |
| Breakfast | 10 |

----------------------------------------------------------------------------------------------------

📈 Revenue Distribution by Product Type:

| Product_Type | Product_Store_Sales_Total |
|---|---|
| Snack Foods | 806142.24 |
| Fruits and Vegetables | 792992.59 |
| Dairy | 598767.62 |
| Frozen Foods | 558556.81 |
| Household | 531371.38 |
| Baking Goods | 525131.04 |
| Meat | 505867.28 |
| Canned | 449016.38 |
| Health and Hygiene | 435005.31 |
| Soft Drinks | 410548.69 |
| Hard Drinks | 152920.74 |
| Others | 123977.09 |
| Breads | 121274.09 |
| Starchy Foods | 120443.98 |
| Seafood | 52936.84 |
| Breakfast | 38161.10 |

----------------------------------------------------------------------------------------------------

🔎 OBSERVATION:

- We can see from the data above, Store OUT001 is a Supermarket Type1 store.

- It's in a Tier 2 city location.

- It was established in 1987.

- It's a store size of type High.

- It earns most of its revenue from Snack Foods, followed by Fruits and Vegetables.

- It earns the least on Breakfast Foods.

Store OUT002¶

# Call the above function for Store OUT002

explore_store("OUT002")

🔍 Summary for Store: OUT002

| | count | unique | top | freq | mean | std | min | 25% | 50% | 75% | max |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Product_Id | 1152 | 1152 | NC2769 | 1 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Weight | 1152.0 | NaN | NaN | NaN | 9.911241 | 1.799846 | 4.0 | 8.7675 | 9.795 | 10.89 | 19.82 |
| Product_Sugar_Content | 1152 | 3 | Low Sugar | 658 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Allocated_Area | 1152.0 | NaN | NaN | NaN | 0.067747 | 0.047567 | 0.006 | 0.031 | 0.0545 | 0.09525 | 0.292 |
| Product_Type | 1152 | 16 | Fruits and Vegetables | 168 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_MRP | 1152.0 | NaN | NaN | NaN | 107.080634 | 24.912333 | 31.0 | 92.8275 | 104.675 | 117.8175 | 224.93 |
| Store_Id | 1152 | 1 | OUT002 | 1152 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Establishment_Year | 1152.0 | NaN | NaN | NaN | 1998.0 | 0.0 | 1998.0 | 1998.0 | 1998.0 | 1998.0 | 1998.0 |
| Store_Size | 1152 | 1 | Small | 1152 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Location_City_Type | 1152 | 1 | Tier 3 | 1152 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Type | 1152 | 1 | Food Mart | 1152 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Store_Sales_Total | 1152.0 | NaN | NaN | NaN | 1762.942465 | 462.862431 | 33.0 | 1495.4725 | 1889.495 | 2133.6225 | 2299.63 |

----------------------------------------------------------------------------------------------------

📊 Product Type Distribution:

| Product_Type | count |
|---|---|
| Fruits and Vegetables | 168 |
| Snack Foods | 146 |
| Dairy | 104 |
| Frozen Foods | 101 |
| Household | 100 |
| Baking Goods | 96 |
| Health and Hygiene | 91 |
| Canned | 88 |
| Meat | 87 |
| Soft Drinks | 62 |
| Hard Drinks | 30 |
| Breads | 23 |
| Others | 19 |
| Breakfast | 15 |
| Starchy Foods | 12 |
| Seafood | 10 |

----------------------------------------------------------------------------------------------------

📈 Revenue Distribution by Product Type:

| Product_Type | Product_Store_Sales_Total |
|---|---|
| Fruits and Vegetables | 298503.56 |
| Snack Foods | 255317.57 |
| Household | 184665.65 |
| Frozen Foods | 180295.95 |
| Dairy | 178888.18 |
| Baking Goods | 169860.50 |
| Health and Hygiene | 164660.81 |
| Meat | 151800.01 |
| Canned | 151467.66 |
| Soft Drinks | 103808.35 |
| Hard Drinks | 54281.85 |
| Breads | 43419.47 |
| Others | 32835.73 |
| Breakfast | 23396.10 |
| Starchy Foods | 20044.98 |
| Seafood | 17663.35 |

----------------------------------------------------------------------------------------------------

🔎 OBSERVATION:

- We can see from the data above, Store OUT002 is a Food Mart.

- It's in a Tier 3 city location.

- It was established in 1998.

- It's a store size of type Small.

- It earns most of its revenue from Fruits and Vegetables, followed by Snack Foods.

- It earns the least on Seafood.

Store OUT003¶

# Call the above function for Store OUT003

explore_store("OUT003")

🔍 Summary for Store: OUT003

| | count | unique | top | freq | mean | std | min | 25% | 50% | 75% | max |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Product_Id | 1349 | 1349 | NC522 | 1 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Weight | 1349.0 | NaN | NaN | NaN | 15.103692 | 1.893531 | 7.35 | 14.02 | 15.18 | 16.35 | 22.0 |
| Product_Sugar_Content | 1349 | 3 | Low Sugar | 750 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Allocated_Area | 1349.0 | NaN | NaN | NaN | 0.068637 | 0.048708 | 0.004 | 0.031 | 0.057 | 0.094 | 0.298 |
| Product_Type | 1349 | 16 | Snack Foods | 186 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_MRP | 1349.0 | NaN | NaN | NaN | 181.358725 | 24.796429 | 85.88 | 166.92 | 179.67 | 198.07 | 266.0 |
| Store_Id | 1349 | 1 | OUT003 | 1349 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Establishment_Year | 1349.0 | NaN | NaN | NaN | 1999.0 | 0.0 | 1999.0 | 1999.0 | 1999.0 | 1999.0 | 1999.0 |
| Store_Size | 1349 | 1 | Medium | 1349 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Location_City_Type | 1349 | 1 | Tier 1 | 1349 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Type | 1349 | 1 | Departmental Store | 1349 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Store_Sales_Total | 1349.0 | NaN | NaN | NaN | 4946.966323 | 677.539953 | 3069.24 | 4355.39 | 4958.29 | 5366.59 | 8000.0 |

----------------------------------------------------------------------------------------------------

📊 Product Type Distribution:

| Product_Type | count |
|---|---|
| Snack Foods | 186 |
| Fruits and Vegetables | 182 |
| Dairy | 145 |
| Frozen Foods | 122 |
| Household | 107 |
| Meat | 106 |
| Baking Goods | 99 |
| Canned | 90 |
| Health and Hygiene | 89 |
| Soft Drinks | 74 |
| Breads | 34 |
| Others | 32 |
| Starchy Foods | 28 |
| Hard Drinks | 23 |
| Breakfast | 19 |
| Seafood | 13 |

----------------------------------------------------------------------------------------------------

📈 Revenue Distribution by Product Type:

| Product_Type | Product_Store_Sales_Total |
|---|---|
| Snack Foods | 918510.44 |
| Fruits and Vegetables | 897437.46 |
| Dairy | 715814.94 |
| Frozen Foods | 597608.42 |
| Household | 523981.64 |
| Meat | 520939.68 |
| Baking Goods | 491908.20 |
| Canned | 452445.17 |
| Health and Hygiene | 439139.18 |
| Soft Drinks | 365046.30 |
| Breads | 175391.93 |
| Others | 159963.75 |
| Starchy Foods | 143538.60 |
| Hard Drinks | 110760.30 |
| Breakfast | 95634.08 |
| Seafood | 65337.48 |

----------------------------------------------------------------------------------------------------

🔎 OBSERVATION:

- We can see from the data above, Store OUT003 is a Departmental Store.

- It's in a Tier 1 city location.

- It was established in 1999.

- It's a store size of type Medium.

- It earns most of its revenue from Snack Foods, followed by Fruits and Vegetables, similar to Store OUT001.

- It also earns the least on Seafood.

Store OUT004¶

# Call the above function for Store OUT004

explore_store("OUT004")

🔍 Summary for Store: OUT004

| | count | unique | top | freq | mean | std | min | 25% | 50% | 75% | max |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Product_Id | 4676 | 4676 | NC584 | 1 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Weight | 4676.0 | NaN | NaN | NaN | 12.349613 | 1.428199 | 7.34 | 11.37 | 12.37 | 13.3025 | 17.79 |
| Product_Sugar_Content | 4676 | 3 | Low Sugar | 2632 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Allocated_Area | 4676.0 | NaN | NaN | NaN | 0.069092 | 0.048584 | 0.004 | 0.031 | 0.056 | 0.097 | 0.297 |
| Product_Type | 4676 | 16 | Fruits and Vegetables | 700 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_MRP | 4676.0 | NaN | NaN | NaN | 142.399709 | 17.513973 | 83.04 | 130.54 | 142.82 | 154.1925 | 197.66 |
| Store_Id | 4676 | 1 | OUT004 | 4676 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Establishment_Year | 4676.0 | NaN | NaN | NaN | 2009.0 | 0.0 | 2009.0 | 2009.0 | 2009.0 | 2009.0 | 2009.0 |
| Store_Size | 4676 | 1 | Medium | 4676 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Location_City_Type | 4676 | 1 | Tier 2 | 4676 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Store_Type | 4676 | 1 | Supermarket Type2 | 4676 | NaN | NaN | NaN | NaN | NaN | NaN | NaN |
| Product_Store_Sales_Total | 4676.0 | NaN | NaN | NaN | 3299.312111 | 468.271692 | 1561.06 | 2942.085 | 3304.18 | 3646.9075 | 5462.86 |

----------------------------------------------------------------------------------------------------

📊 Product Type Distribution:

| Product_Type | count |
|---|---|
| Fruits and Vegetables | 700 |
| Snack Foods | 615 |
| Frozen Foods | 446 |
| Household | 399 |
| Dairy | 397 |
| Baking Goods | 385 |
| Canned | 380 |
| Health and Hygiene | 334 |
| Meat | 295 |
| Soft Drinks | 277 |
| Breads | 113 |
| Hard Drinks | 95 |
| Starchy Foods | 69 |
| Others | 69 |
| Breakfast | 62 |
| Seafood | 40 |

----------------------------------------------------------------------------------------------------

📈 Revenue Distribution by Product Type:

| Product_Type | Product_Store_Sales_Total |
|---|---|
| Fruits and Vegetables | 2311899.66 |
| Snack Foods | 2009026.70 |
| Frozen Foods | 1473519.65 |
| Household | 1324721.50 |
| Dairy | 1318447.30 |
| Baking Goods | 1266086.26 |
| Canned | 1247153.50 |
| Health and Hygiene | 1124901.91 |
| Meat | 950604.97 |
| Soft Drinks | 917641.38 |
| Breads | 374856.75 |
| Hard Drinks | 307851.73 |
| Starchy Foods | 234746.89 |
| Others | 224719.73 |
| Breakfast | 204939.13 |
| Seafood | 136466.37 |

----------------------------------------------------------------------------------------------------

🔎 OBSERVATION:

- We can see from the data above, Store OUT004 is a Supermarket Type2 Store.

- It's in a Tier 2 city location.

- It was established in 2009.

- It's a store size of type Medium.

- It earns most of its revenue from Fruits and Vegetables, followed by Snack Foods, similar to Store OUT002.

- It also earns the least on Seafood.

Final Data Checks¶

- class imbalance

- outliers

- missing value validation again

- skewness

1. Check for Class Imbalance (Categorical Features)¶

# Loop through all selected categorical features

# and print the percentage distribution of each category

for col in categorical_features:
    print(f"\nColumn: {col}")
    print(dataset[col].value_counts(normalize=True).round(3) * 100)

Column: Product_Sugar_Content
Product_Sugar_Content
Low Sugar 55.7
Regular 26.9
No Sugar 17.3
Name: proportion, dtype: float64

Column: Product_Type
Product_Type
Fruits and Vegetables 14.3
Snack Foods 13.1
Frozen Foods 9.3
Dairy 9.1
Household 8.4
Baking Goods 8.2
Canned 7.7
Health and Hygiene 7.2
Meat 7.1
Soft Drinks 5.9
Breads 2.3
Hard Drinks 2.1
Others 1.7
Starchy Foods 1.6
Breakfast 1.2
Seafood 0.9
Name: proportion, dtype: float64

Column: Store_Size
Store_Size
Medium 68.8
High 18.1
Small 13.1
Name: proportion, dtype: float64

Column: Store_Location_City_Type
Store_Location_City_Type
Tier 2 71.5
Tier 1 15.4
Tier 3 13.1
Name: proportion, dtype: float64

Column: Store_Type
Store_Type
Supermarket Type2 53.4
Supermarket Type1 18.1
Departmental Store 15.4
Food Mart 13.1
Name: proportion, dtype: float64

🔎 OBSERVATION:

- While some categorical features such as Product_Sugar_Content, Store_Size, and Store_Location_City_Type exhibit moderate class imbalance, none are extreme enough to warrant resampling or weighting adjustments in the modeling phase.

- I believe this distribution is acceptable for model training without additional correction.
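The printed percentages can also be screened automatically for sparse categories. The helper below is a minimal sketch: `rare_categories` is a hypothetical name, the 2% cutoff is an arbitrary assumption rather than a rule from this notebook, and the series is made up. In the output above, for example, Seafood (0.9%) and Breakfast (1.2%) would fall under such a cutoff.

```python
import pandas as pd

def rare_categories(series, min_share=0.02):
    """Return categories whose relative frequency falls below min_share."""
    shares = series.value_counts(normalize=True)
    return shares[shares < min_share]

# Made-up column: 60% Snacks, 39% Dairy, 1% Seafood
toy = pd.Series(["Snacks"] * 60 + ["Dairy"] * 39 + ["Seafood"] * 1, name="Product_Type")
rare = rare_categories(toy)
print(rare)
```

Rare levels matter mainly for one-hot encoding, where each one adds a column backed by very few rows.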

2. Check for Outliers (Numerical Features)¶

# Detect outliers using the IQR method for all numeric features

for col in numeric_features:
    # Calculate Q1 (25th percentile) and Q3 (75th percentile)
    q1 = dataset[col].quantile(0.25)
    q3 = dataset[col].quantile(0.75)
    iqr = q3 - q1

    # Identify data points that fall outside 1.5 * IQR
    outliers = dataset[(dataset[col] < q1 - 1.5 * iqr) | (dataset[col] > q3 + 1.5 * iqr)]

    # Print count of outliers
    print(f"{col}: {len(outliers)} outliers")

Product_Weight: 54 outliers
Product_Allocated_Area: 104 outliers
Product_MRP: 57 outliers
Store_Establishment_Year: 0 outliers

🔎 OBSERVATION:

- Outliers were detected in several numerical features using the IQR method. However, after examining their frequency and context during univariate analysis, they appear to reflect valid business scenarios (e.g., high Product_MRP or larger product weights). Therefore, I opted not to apply capping or transformation, as these values are likely informative for sales prediction rather than errors.

3. Re-Check for any missing data¶

# Re-check and display only columns with missing values (if any)

missing_data = dataset.isnull().sum()

missing_data = missing_data[missing_data > 0]

print(missing_data if not missing_data.empty else "No missing data found.")

No missing data found.

🔎 OBSERVATION:

- No missing values were introduced or overlooked during Univariate or Bivariate EDA. The dataset remains clean and fully populated, confirming no imputation is required before modeling.

4. Skewness Check for Target and Predictors¶

# Check skewness for numeric features and target

# Values > 1 or < -1 are considered highly skewed

dataset[numeric_features + [target]].skew().sort_values(ascending=False)

| | 0 |
|---|---|
| Product_Allocated_Area | 1.128093 |
| Product_Store_Sales_Total | 0.092024 |
| Product_MRP | 0.036513 |
| Product_Weight | 0.017514 |
| Store_Establishment_Year | -0.758061 |

🔎 OBSERVATION:

Based on the above:

- Product_Allocated_Area: Highly right-skewed (skew > 1); could benefit from a log transformation to reduce the skew.

- Product_Store_Sales_Total (target): Nearly symmetric; no transformation required.

- Product_MRP: Close to symmetric; transformation not needed.

- Product_Weight: Symmetric; no transformation needed.

- Store_Establishment_Year: Moderate left skew (about -0.76); acceptable as-is, no transformation planned.

Data Preprocessing¶

Data Preprocessing Steps Checklist (recommended order)

- Drop Irrelevant Columns (e.g. Product_Id, Store_Id: identifier columns)

- Split Features and Target

- Train/Test Split (80/20 is typical)

- One-Hot Encoding for Categorical Variables

- Feature Scaling (for numerical features) (Optional, depending on model)

- Pipeline Creation (ColumnTransformer, Pipeline, etc.)

- Final Fit/Transform

Transform Product_Allocated_Area to adjust for skewness¶

- Product_Allocated_Area is highly right skewed - (skewness > 1)

- Drop original Product_Allocated_Area column to use Product_Allocated_Area_Log in my model.

# Log-transform 'Product_Allocated_Area' to reduce right skewness

# log1p is used instead of log to handle zero values safely

dataset['Product_Allocated_Area_Log'] = np.log1p(dataset['Product_Allocated_Area'])

# To undo this transformation (for example, before re-running this cell),

# uncomment the two statements below:

# dataset['Product_Allocated_Area'] = np.expm1(dataset['Product_Allocated_Area_Log'])

# dataset.drop(columns=['Product_Allocated_Area_Log'], inplace=True)

# Drop the original skewed column

dataset.drop(columns=['Product_Allocated_Area'], inplace=True)

# Display remaining columns to confirm changes

print("Current columns in dataset:")

print(dataset.columns)

Current columns in dataset:

Index(['Product_Id', 'Product_Weight', 'Product_Sugar_Content', 'Product_Type',

'Product_MRP', 'Store_Id', 'Store_Establishment_Year', 'Store_Size',

'Store_Location_City_Type', 'Store_Type', 'Product_Store_Sales_Total',

'Product_Allocated_Area_Log'],

dtype='object')

🔎 OBSERVATION:

- I can see from the columns above, I have dropped the original Product_Allocated_Area column and now only have the log transformed column Product_Allocated_Area_Log.
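It can be worth confirming how much a log transform actually reduces skew. For small values, `log1p(x)` stays close to `x`, so the change in skewness may be modest for a column whose values are well below 1. The sketch below compares skewness before and after on synthetic data; the Beta-distributed sample is a placeholder for a right-skewed column in a small range and is not the real Product_Allocated_Area.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)

# Synthetic right-skewed values, mostly below 0.3 (placeholder data)
area = pd.Series(rng.beta(a=1.5, b=18.0, size=5_000), name="area")
area_log = np.log1p(area)

skew_before = area.skew()
skew_after = area_log.skew()

print(f"skew before log1p: {skew_before:.3f}")
print(f"skew after log1p:  {skew_after:.3f}")
```

Running the same two `.skew()` calls on the real column before dropping it would show whether the transformed feature ends up meaningfully closer to symmetric.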

Transform Product_Sugar_Content column¶

- I already addressed this data issue above, before the EDA.

Address the high number of Product Types (16) to see if we can group them.¶

We create two categories, Perishables and Non Perishables, to reduce the number of product types and simplify one-hot encoding. This should:

- Simplify our model

- Improve model generalization

- Reduce the number of dummy variables

- Reduce dimensionality

# Grouping Product_Type into Perishables vs. Non Perishables

perishable_types = [

'Dairy', 'Fruits and Vegetables', 'Meat', 'Breads', 'Seafood'

]

# Create a new column 'Product_Type_Category' with grouped values

dataset['Product_Type_Category'] = dataset['Product_Type'].apply(

lambda x: 'Perishables' if x in perishable_types else 'Non Perishables'

)

# Drop the original high-cardinality column

dataset.drop(columns=['Product_Type'], inplace=True)

# Confirm the transformation

print(dataset['Product_Type_Category'].value_counts())

Product_Type_Category
Non Perishables 5824
Perishables 2939
Name: count, dtype: int64

🔎 OBSERVATION:

- We grouped the original 16 Product_Type values into two broader categories, Perishables and Non Perishables. This was done to simplify one-hot encoding, reduce dimensionality, and support better model generalization. The new column Product_Type_Category will be used in modeling going forward.

- The original Product_Type column was dropped in the cell above.

- Since the product types are now reduced, I'll also drop the Product_Id column during Data Preparation below, as I no longer need it to break the products down further.
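Because the grouping cell above modifies `dataset` in place and drops Product_Type, it raises a KeyError if it is run a second time. One alternative, sketched here under my own naming (`add_product_type_category` and `PERISHABLE_TYPES` are hypothetical), is a small function that returns a new frame and leaves its input untouched. The four-row frame is made up for illustration.

```python
import numpy as np
import pandas as pd

# Same five perishable types as in the cell above
PERISHABLE_TYPES = {"Dairy", "Fruits and Vegetables", "Meat", "Breads", "Seafood"}

def add_product_type_category(df, col="Product_Type"):
    """Return a copy of df with a two-level Product_Type_Category column."""
    out = df.copy()
    out["Product_Type_Category"] = np.where(
        out[col].isin(PERISHABLE_TYPES), "Perishables", "Non Perishables"
    )
    return out

toy = pd.DataFrame({"Product_Type": ["Dairy", "Canned", "Seafood", "Soft Drinks"]})
result = add_product_type_category(toy)
print(result["Product_Type_Category"].value_counts())
```

Dropping the original column can then happen once, in the Data Preparation step, which keeps the cell safe to re-run.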

Data Preparation for Modeling¶

Our goal is to forecast the Product_Store_Sales_Total.

- Before building the model, we'll drop unnecessary columns and encode the categorical features.

- We'll then split the data into training and testing sets to evaluate the model's performance on unseen data.

# Re-Print the first 5 rows of the dataset

dataset.head()

| | Product_Id | Product_Weight | Product_Sugar_Content | Product_MRP | Store_Id | Store_Establishment_Year | Store_Size | Store_Location_City_Type | Store_Type | Product_Store_Sales_Total | Product_Allocated_Area_Log | Product_Type_Category |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | FD6114 | 12.66 | Low Sugar | 117.08 | OUT004 | 2009 | Medium | Tier 2 | Supermarket Type2 | 2842.40 | 0.026642 | Non Perishables |
| 1 | FD7839 | 16.54 | Low Sugar | 171.43 | OUT003 | 1999 | Medium | Tier 1 | Departmental Store | 4830.02 | 0.134531 | Perishables |
| 2 | FD5075 | 14.28 | Regular | 162.08 | OUT001 | 1987 | High | Tier 2 | Supermarket Type1 | 4130.16 | 0.030529 | Non Perishables |
| 3 | FD8233 | 12.10 | Low Sugar | 186.31 | OUT001 | 1987 | High | Tier 2 | Supermarket Type1 | 4132.18 | 0.106160 | Non Perishables |
| 4 | NC1180 | 9.57 | No Sugar | 123.67 | OUT002 | 1998 | Small | Tier 3 | Food Mart | 2279.36 | 0.009950 | Non Perishables |

Drop Unnecessary Columns¶

Remove the columns that are not required.

- 'Product_Id', 'Store_Id' → unique identifiers. Product_Type has already been condensed into Product_Type_Category (perishables vs. non-perishables), so there is no need to break products down further using the prefix of Product_Id (e.g. FD, NC). We'll drop these columns completely.

- 'Product_Type' → replaced with 'Product_Type_Category'

- 'Store_Establishment_Year' → already analyzed and found not predictive

# Drop unnecessary columns (exclude 'Product_Type' - already dropped earlier)
data = dataset.drop(
    ["Product_Id", "Store_Id", "Store_Establishment_Year"],
    axis=1,
    errors='ignore'  # ignores columns that aren't found
)

# Print the number of rows and columns

print("Shape of the data:", data.shape)

print("-" * 100) # Print a divider line

# Print the first 5 rows of the data

data.head()

Shape of the data: (8763, 9) ----------------------------------------------------------------------------------------------------

| | Product_Weight | Product_Sugar_Content | Product_MRP | Store_Size | Store_Location_City_Type | Store_Type | Product_Store_Sales_Total | Product_Allocated_Area_Log | Product_Type_Category |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 12.66 | Low Sugar | 117.08 | Medium | Tier 2 | Supermarket Type2 | 2842.40 | 0.026642 | Non Perishables |
| 1 | 16.54 | Low Sugar | 171.43 | Medium | Tier 1 | Departmental Store | 4830.02 | 0.134531 | Perishables |
| 2 | 14.28 | Regular | 162.08 | High | Tier 2 | Supermarket Type1 | 4130.16 | 0.030529 | Non Perishables |
| 3 | 12.10 | Low Sugar | 186.31 | High | Tier 2 | Supermarket Type1 | 4132.18 | 0.106160 | Non Perishables |
| 4 | 9.57 | No Sugar | 123.67 | Small | Tier 3 | Food Mart | 2279.36 | 0.009950 | Non Perishables |
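One caveat with `errors='ignore'` is that a misspelled column name is skipped silently. The sketch below shows that behaviour on a one-row frame and a simple way to list the names that were not found. The frame and the deliberately misspelled `Store_Establishment_Yr` are made up for illustration.

```python
import pandas as pd

# One-row stand-in for the dataset (illustrative values only).
df = pd.DataFrame(
    {
        "Product_Id": ["FD6114"],
        "Store_Id": ["OUT004"],
        "Store_Establishment_Year": [2009],
        "Product_MRP": [117.08],
    }
)

# The last name is a deliberate typo to show what errors="ignore" hides.
to_drop = ["Product_Id", "Store_Id", "Store_Establishment_Yr"]

# The lenient drop succeeds, but the misspelled column is left in place.
lenient = df.drop(columns=to_drop, errors="ignore")

# Listing the requested names that do not exist makes the typo visible.
missing = [col for col in to_drop if col not in df.columns]

print("Remaining columns:", list(lenient.columns))
print("Requested but not found:", missing)
```

Checking `missing` before dropping keeps the convenience of a re-runnable cell while still surfacing typos.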

Separating Features and Target¶

# Separate features and target variable

X = data.drop("Product_Store_Sales_Total", axis=1) # Feature set

y = data["Product_Store_Sales_Total"] # Target variable

🔎 OBSERVATION:

- We separated the dataset into feature variables X and target variable y. The column Product_Store_Sales_Total is designated as the prediction target for our regression model. All other columns will be used as predictors.

Train-Test Split (80:20)¶

# Split the dataset into training and testing sets (80% train, 20% test)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, shuffle=True
)

# Check the shape of the splits

print(f"X_train shape: {X_train.shape}")

print(f"X_test shape: {X_test.shape}")

print(f"y_train shape: {y_train.shape}")

print(f"y_test shape: {y_test.shape}")

X_train shape: (7010, 8) X_test shape: (1753, 8) y_train shape: (7010,) y_test shape: (1753,)

🔎 OBSERVATION:

- The dataset has been split into training and testing subsets using an 80:20 ratio. This ensures the model can be trained on the majority of data while being evaluated on a holdout set for unbiased performance measurement. The random_state ensures reproducibility of results.

- The target variable (y) is correctly split into training and testing sets with the same number of rows as X_train and X_test.
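The split sizes printed above can be checked by hand. As far as I understand scikit-learn's behaviour, a fractional `test_size` is rounded up to a whole number of test rows and the remainder goes to training. The second half of the sketch uses Python's `random` module as a stand-in (not scikit-learn's own generator) to show why a fixed seed gives a repeatable split; `split_indices` is a hypothetical helper.

```python
import math
import random

n_samples = 8763   # rows in the prepared dataset
test_size = 0.2

# Test rows are rounded up; training rows are whatever is left.
n_test = math.ceil(test_size * n_samples)
n_train = n_samples - n_test
print(n_train, n_test)


def split_indices(n, n_test, seed):
    """Shuffle row indices with a fixed seed and cut off the first n_test."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    return indices[n_test:], indices[:n_test]


# Same seed, same split; a different seed gives a different split.
train_a, test_a = split_indices(n_samples, n_test, seed=42)
train_b, test_b = split_indices(n_samples, n_test, seed=42)
train_c, test_c = split_indices(n_samples, n_test, seed=7)
print(test_a == test_b, test_a == test_c)
```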

Data Pre-processing Pipeline¶

Step 1: Identify Categorical Features

# Automatically detect categorical features

categorical_features = data.select_dtypes(include=['object', 'category']).columns.tolist()

# Display detected categorical features

print("Categorical Features:", categorical_features)

Categorical Features: ['Product_Sugar_Content', 'Store_Size', 'Store_Location_City_Type', 'Store_Type', 'Product_Type_Category']

🔎 OBSERVATION:

- This step programmatically identifies all columns with type 'object' or 'category', which are typically non-numeric and need to be one-hot encoded before being used in machine learning models.

Step 2: Create Preprocessor for Categorical Encoding

# Define a preprocessor that applies OneHotEncoding to all categorical features

preprocessor = make_column_transformer(
    (Pipeline([
        ('encoder', OneHotEncoder(handle_unknown='ignore', sparse_output=False))
    ]), categorical_features),
    remainder='passthrough'  # Leave all non-categorical columns (numerical) unchanged
)

🔎 OBSERVATION:

- We use make_column_transformer to apply a OneHotEncoder to the categorical columns while leaving numerical features untouched (remainder='passthrough'). Setting handle_unknown='ignore' means a category that was not seen during training is encoded as all zeros at prediction time instead of raising an error.

Model Building¶

Define functions for Model Evaluation¶

- We'll fit different models on the train data and observe their performance.

- We'll try to improve that performance by tuning some hyperparameters available for that algorithm.

- We'll use GridSearchCV for hyperparameter tuning, with the R² score (r2_score) as the metric to optimize.

Let's start by creating a function to get model scores, so that we don't have to use the same code repeatedly.

# function to compute adjusted R-squared
def adj_r2_score(predictors, targets, predictions):
    r2 = r2_score(targets, predictions)
    n = predictors.shape[0]
    k = predictors.shape[1]
    return 1 - ((1 - r2) * (n - 1) / (n - k - 1))


# function to compute different metrics to check performance of a regression model
def model_performance_regression(model, predictors, target):
    """
    Function to compute different metrics to check regression model performance

    model: regressor
    predictors: independent variables
    target: dependent variable
    """
    # predicting using the independent variables
    pred = model.predict(predictors)

    r2 = r2_score(target, pred)  # to compute R-squared
    adjr2 = adj_r2_score(predictors, target, pred)  # to compute adjusted R-squared
    rmse = np.sqrt(mean_squared_error(target, pred))  # to compute RMSE
    mae = mean_absolute_error(target, pred)  # to compute MAE
    mape = mean_absolute_percentage_error(target, pred)  # to compute MAPE

    # creating a dataframe of metrics
    df_perf = pd.DataFrame(
        {
            "RMSE": rmse,
            "MAE": mae,
            "R-squared": r2,
            "Adj. R-squared": adjr2,
            "MAPE": mape,
        },
        index=[0],
    )

    return df_perf
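The adjusted R-squared formula, 1 - (1 - R²)(n - 1)/(n - k - 1), can be checked by hand against the R-squared values reported for the base Random Forest later in this notebook. The sketch assumes k = 8, since the function receives the raw predictor frame (8 columns) rather than the one-hot encoded matrix. `adjusted_r2` is a standalone helper written for this check, not part of the notebook.

```python
def adjusted_r2(r2: float, n: int, k: int) -> float:
    """Adjusted R-squared for n samples and k predictors."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


# R-squared values as reported in the notebook; n is the row count of each
# split and k = 8 is the number of raw predictor columns.
adj_train = adjusted_r2(0.989994, n=7010, k=8)
adj_test = adjusted_r2(0.928923, n=1753, k=8)

print(round(adj_train, 6), round(adj_test, 6))
```

With thousands of rows and only eight predictors the penalty term is tiny, which is why the adjusted values sit so close to the plain R-squared. Note also that scikit-learn's `mean_absolute_percentage_error` returns a fraction, so a MAPE of 0.039 corresponds to roughly 3.9%.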

Random Forest Regressor - Model Training Pipeline - Base Model¶

- Initialize the base Random Forest Regressor model with default parameters; only random_state is set, for reproducibility.

# Define base Random Forest model

rf_model = RandomForestRegressor(random_state=42)

Create a Pipeline with Preprocessing and Model¶

- preprocessor: the one-hot encoding step defined earlier.

- The pipeline combines data preprocessing and model training into a single object, which keeps the workflow clean and reproducible.

# Create pipeline with preprocessing and Random Forest model

rf_pipeline = make_pipeline(preprocessor, rf_model)

Fit the Pipeline¶

- Trains the entire pipeline on the training set: categorical encoding followed by the random forest.

# Train the model pipeline on the training data

rf_pipeline.fit(X_train, y_train)

Pipeline(steps=[('columntransformer',
                 ColumnTransformer(remainder='passthrough',
                                   transformers=[('pipeline',
                                                  Pipeline(steps=[('encoder',
                                                                   OneHotEncoder(handle_unknown='ignore',
                                                                                 sparse_output=False))]),
                                                  ['Product_Sugar_Content',
                                                   'Store_Size',
                                                   'Store_Location_City_Type',
                                                   'Store_Type',
                                                   'Product_Type_Category'])])),
                ('randomforestregressor',
                 RandomForestRegressor(random_state=42))])


Evaluate Model Performance on Training and Test¶

- This displays a summary of how the model performs on both the training and test data.

# Evaluate performance on training data

rf_estimator_model_train_perf = model_performance_regression(rf_pipeline, X_train,y_train)

print("Training performance \n")

rf_estimator_model_train_perf

Training performance

| | RMSE | MAE | R-squared | Adj. R-squared | MAPE |
|---|---|---|---|---|---|
| 0 | 106.523083 | 40.007379 | 0.989994 | 0.989983 | 0.014839 |

# Evaluate performance on test data

rf_estimator_model_test_perf = model_performance_regression(rf_pipeline, X_test,y_test)

print("Testing performance \n")

rf_estimator_model_test_perf

Testing performance

| | RMSE | MAE | R-squared | Adj. R-squared | MAPE |
|---|---|---|---|---|---|
| 0 | 284.781996 | 109.399474 | 0.928923 | 0.928597 | 0.039265 |

🔎 OBSERVATION:

-